Published online Aug 27, 2025. doi: 10.4240/wjgs.v17.i8.109463

Revised: May 19, 2025

Accepted: June 13, 2025

Published online: August 27, 2025

Processing time: 105 Days and 17.9 Hours

Artificial intelligence (AI) is gaining widespread traction in surgical disciplines, particularly in gastrointestinal (GI) surgery, where it offers opportunities to en

To comprehensively evaluate current AI applications in GI surgery, highlighting its role in preoperative planning, intraoperative guidance, postoperative moni

This systematic review was conducted in accordance with PRISMA guidelines. We searched the Web of Science Core Collection through March 31, 2025 using the terms “artificial intelligence” AND “gastrointestinal surgery”. Inclusion criteria: Original, English-language, full-text articles indexed under the “Surgery” ca

The included studies demonstrated that AI has superior performance compared to traditional clinical tools in areas such as risk prediction, lesion detection, nerve identification, and complication forecasting. Notably, convolutional neural net

AI is transforming GI surgery from preoperative risk assessment to postoperative care and training. While many tools now match or exceed expert-level performance, successful clinical adoption requires transparent, validated models that seamlessly integrate into surgical workflows. With continued multidisciplinary collaboration, AI is positioned to become a trusted companion in surgical practice.

Core Tip: This systematic review highlights how artificial intelligence (AI) is rapidly reshaping gastrointestinal surgery across preoperative, intraoperative, and postoperative stages. By synthesizing findings from 45 original studies, this review identifies AI’s strengths in enhancing surgical precision, predicting complications, improving diagnostic accuracy, and supporting surgical education. It emphasizes that while many AI tools now match or exceed clinician performance, challenges remain in validation, integration, and interpretability. This review offers a comprehensive roadmap for clinicians and researchers seeking to responsibly implement AI in gastrointestinal surgical practice.

- Citation: Tasci B, Dogan S, Tuncer T. Artificial intelligence in gastrointestinal surgery: A systematic review. World J Gastrointest Surg 2025; 17(8): 109463

- URL: https://www.wjgnet.com/1948-9366/full/v17/i8/109463.htm

- DOI: https://dx.doi.org/10.4240/wjgs.v17.i8.109463

Gastrointestinal (GI) surgery poses unique challenges due to the complex anatomy of the alimentary tract and the high variability of tumor location, vascular supply, and tissue perfusion. Recent advances in artificial intelligence (AI) have begun to address these challenges by providing real-time image enhancement, predictive modeling, and decision support. AI-driven navigation systems that use indocyanine green angiography or hyperspectral imaging enhance intraoperative tissue delineation and have reduced complication rates in procedures such as total mesorectal excision[1,2]. Machine learning algorithms such as support vector machines and random forest models facilitate personalized preoperative risk evaluation for complications, including anastomotic leakage following rectal cancer resection, and have forecast lymph node involvement in early colorectal cancer more precisely than conventional clinical staging[3,4]. Collectively, these advancements demonstrate that AI is poised to become an essential instrument in enhancing the safety and effectiveness of GI surgery[5]. Postoperatively, AI supports patient care by enabling prompt identification of complications such as surgical site infections or leakage. By continuously analyzing clinical data, AI systems can flag warning signs sooner, allowing for timely interventions and improved recovery outcomes[6,7]. Mo

Therefore, we aimed to summarize current evidence regarding the performance, validation status, and clinical applicability of AI models in preoperative, intraoperative, and postoperative phases of GI surgery. Our aims were to: (1) Quantify AI performance metrics in GI surgical tasks; (2) Evaluate methodological quality, including external validation and dataset standardization; and (3) Identify barriers and facilitators to clinical integration. By addressing this gap, this systematic review will provide surgeons and researchers with a clear roadmap for translating AI innovations into safe and effective clinical practice.

This systematic review was executed in compliance with the PRISMA criteria, which provide a recognized framework for guaranteeing transparency and rigor in systematic reviews. To present a comprehensive summary of the existing literature, an extensive search was conducted utilizing the Web of Science (WoS) Core Collection database, encompassing all accessible papers until March 2025. There were no limitations on publication year, enabling the tracking of the evolution of this topic throughout time. The search method included the terms “artificial intelligence” and “gas

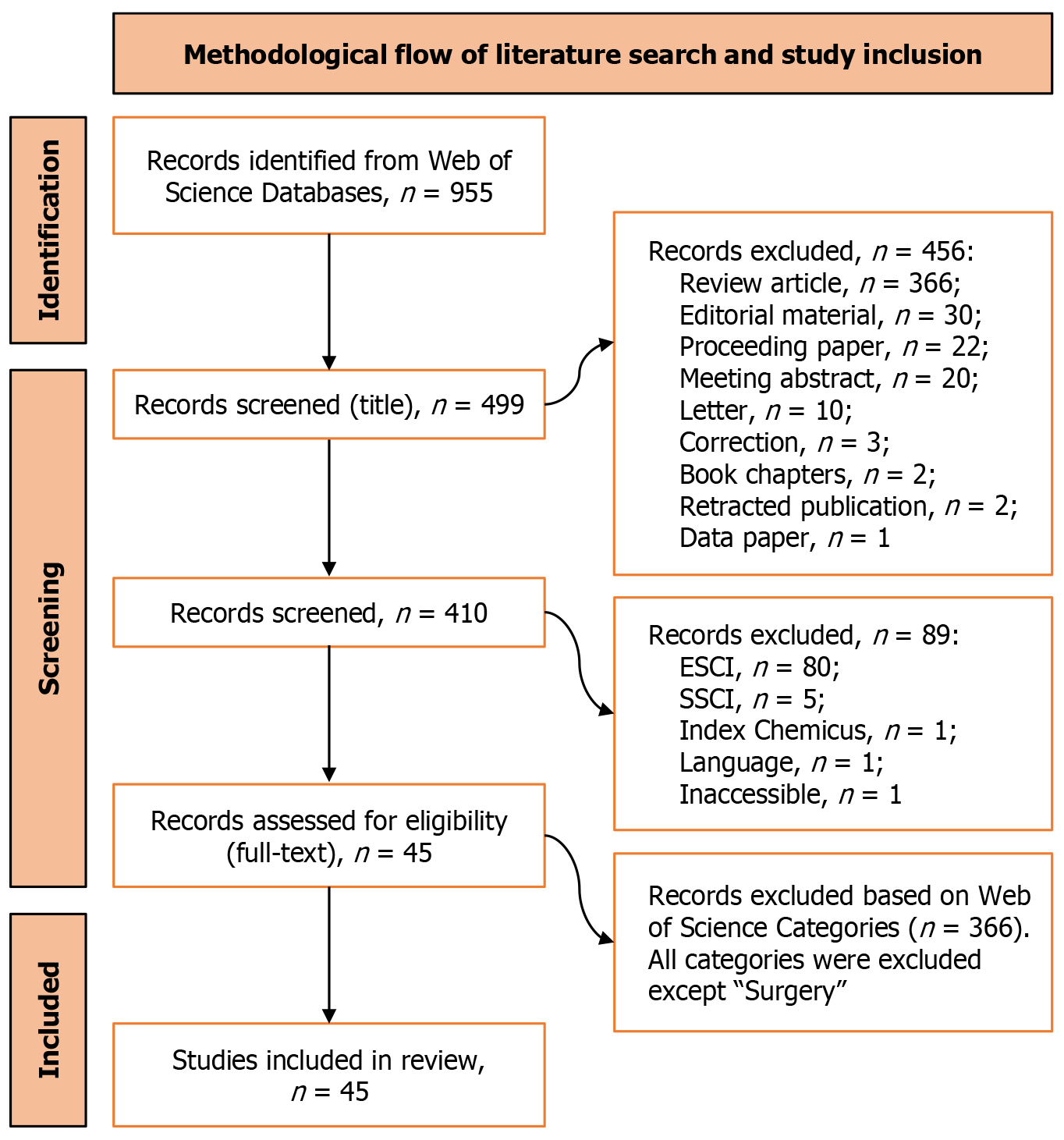

From each included study, key information was systematically extracted, including: Author(s) and year of publication, country of origin, surgical area and purpose of AI implementation, type of AI model used, clinical context or target pathology, and reported performance metrics. Due to significant variation in study designs, AI models, and how results were reported, a meta-analysis was not feasible. Instead, a qualitative, thematic approach was adopted to synthesize the findings. Results were grouped and analyzed according to thematic categories reflecting different stages and functions of AI application within GI surgery. To provide transparency and traceability, Figure 1 illustrates the full selection process from the initial database search to the final inclusion of studies along with specific reasons for exclusion at each stage, in accordance with PRISMA standards.

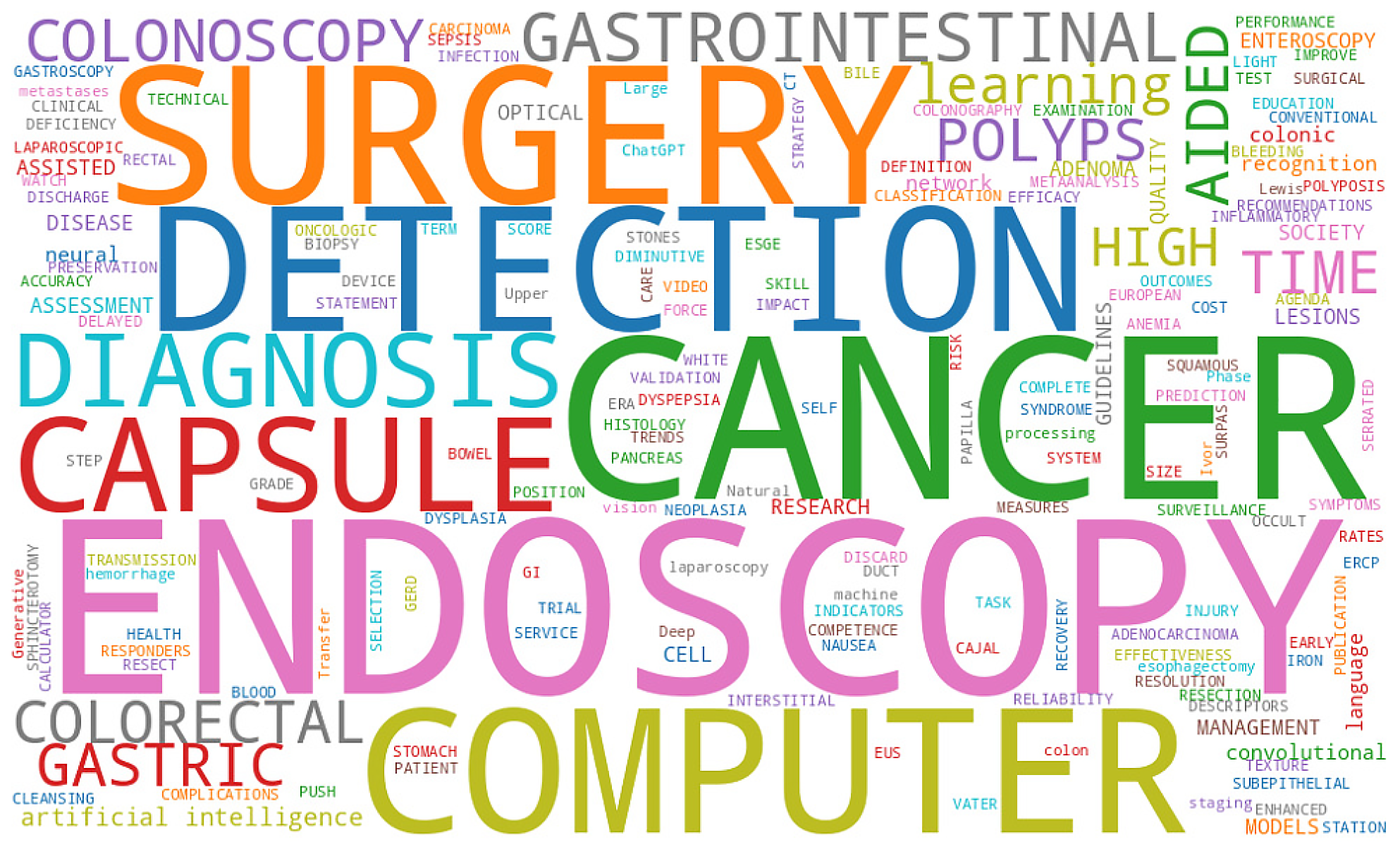

The word cloud in Figure 2 highlights the dominant research themes in AI applications within GI surgery. Terms such as “Cancer”, “Endoscopy”, “Surgery”, “Detection”, “Computer”, and “Diagnosis” appeared most prominently, indicating a strong focus on cancer detection, preoperative planning, and surgical decision support using endoscopic imaging. Additionally, keywords like “Capsule”, “Colonoscopy”, and “Polyp” suggest a notable emphasis on non-invasive screening techniques. Overall, this visual representation provides a comprehensive overview of the key concepts and clinical areas addressed in the reviewed literature.

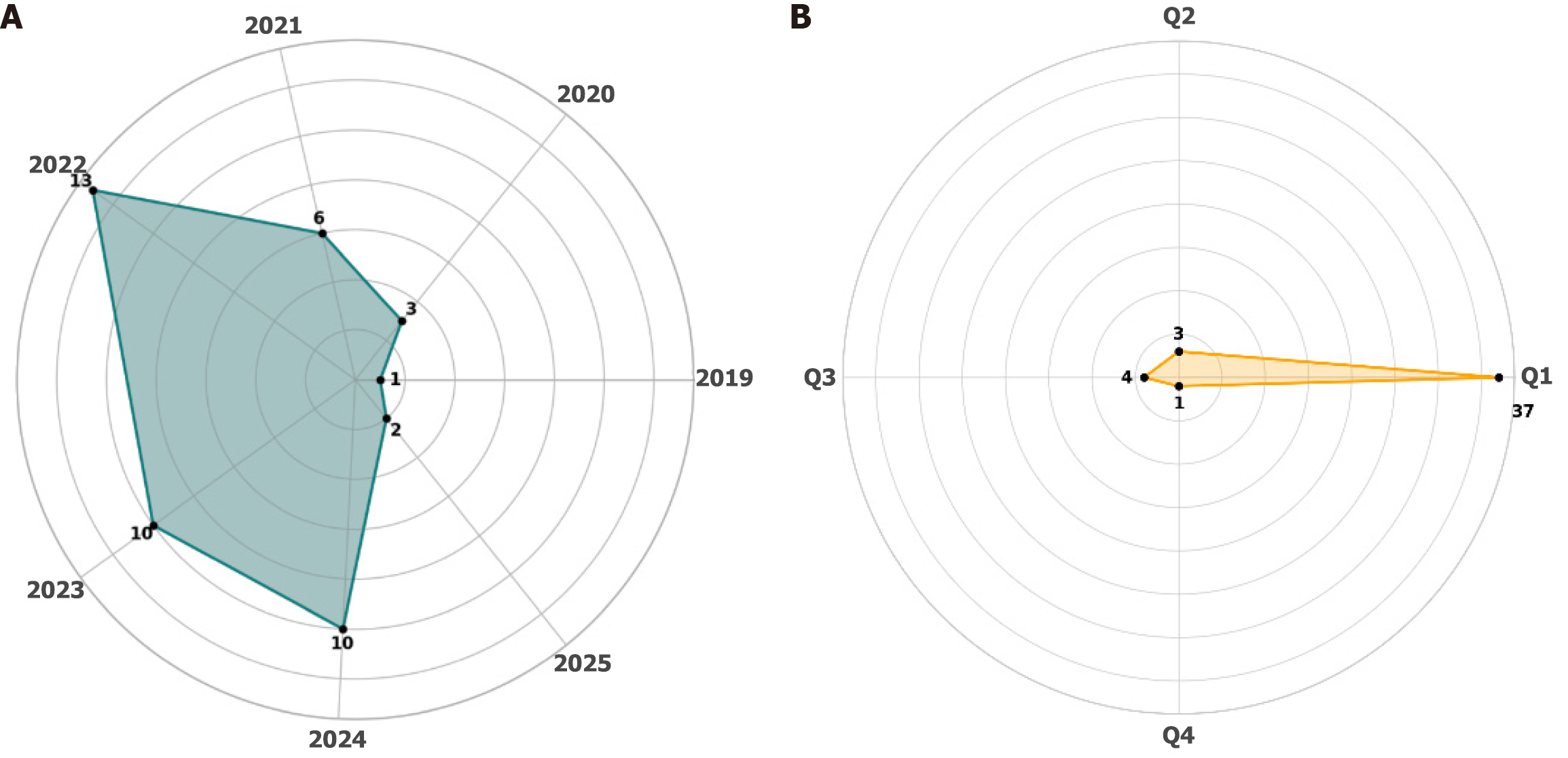

As seen in Figure 3A, the number of studies on AI applications in GI surgery has increased markedly in recent years, peaking in 2022. This trend reflects growing research interest in, and clinical adoption of, AI-driven techniques in surgical disciplines. Figure 3B illustrates the distribution of the selected articles across journal quartiles, with a strong concentration in Q1 journals (n = 37). Only a small number of studies appeared in Q2 (n = 3), Q3 (n = 4), and Q4 (n = 1) journals. This distribution indicates that the literature analyzed in this review is drawn largely from high-impact venues.

The analysis of publication venues (Table 1) shows that research on AI applications in GI surgery is concentrated in a few high-impact journals. Surgical Endoscopy and Other Interventional Techniques (n = 14), Endoscopy (n = 11), and Digestive Endoscopy (n = 7) collectively account for the majority of included studies, emphasizing the central role of endoscopy-driven research in this field. The remaining publications are distributed across journals such as Annals of Surgery, Colorectal Disease, and International Journal of Surgery, each contributing a single study. This distribution reflects both the interdisciplinary nature of the field and the growing clinical interest in AI-assisted techniques within minimally invasive and image-guided surgical practice.

| Journal title | Number of publications |

| Surgical Endoscopy and Other Interventional Techniques | 14 |

| Endoscopy | 11 |

| Digestive Endoscopy | 7 |

| Annals of Surgery | 1 |

| ANZ Journal of Surgery | 1 |

| Cirugía y Cirujanos | 1 |

| Colorectal Disease | 1 |

| Diseases of the Colon & Rectum | 1 |

| International Journal of Computer Assisted Radiology and Surgery | 1 |

| International Journal of Surgery | 1 |

| Journal of Gastrointestinal Surgery | 1 |

| Journal of Surgical Research | 1 |

| Obesity Surgery | 1 |

| Surgery | 1 |

| Surgical Laparoscopy Endoscopy & Percutaneous Techniques | 1 |

Preoperative planning in GI surgery aims to reduce complications, improve operative precision, and optimize patient selection. Embedding AI in the preoperative phase has produced risk-prediction models that, in the studies summarized in Table 2, frequently outperform conventional scoring systems in diagnostic accuracy and clinical relevance. These models, ranging from image-based deep learning networks to classic machine-learning risk scores, predict conditions such as choledocholithiasis and colorectal neoplasia, flag patients at high risk for postoperative complications, and improve anatomical mapping to guide surgeons before the first incision. Reported gains over traditional methods span speed, accuracy, and personalization. In sum, integrating AI into the preoperative workflow goes beyond a mere technical upgrade: it represents a strategic advance toward safer and more tailored surgical care.

| Ref. | Focus area | AI method/model | Key outcome | Number of data points | Results |

| Galvis-García et al[13], 2023 | Colorectal polyp detection and classification | Deep learning, CNN, CAD (CADe/CADx) | Increased adenoma and polyp detection rates using AI assisted colonoscopy in real time | 1038 patients (RCT), 8641 images (CNN study), 466 polyps (CADx), 238 lesions (endocytoscopy) | Sensitivity up to 96.5%, specificity up to 93%, accuracy up to 96.4%, F1 score approximately 94%, NPV up to 99.6% |

| Zhang et al[14], 2023 | Diagnosis of choledocholithiasis in gallstone patients | Machine learning (7 models), AI (ModelArts) | Developed and validated AI model with high diagnostic accuracy for CBD stone prediction | 1199 patients (681 with CBD stones) | ModelArts AI: Accuracy 0.97, recall 0.97, precision 0.971, F1 score 0.97; machine learning AUCs: 0.77-0.81 |

| Ahmad et al[15], 2022 | Detection of subtle and advanced colorectal neoplasia | Deep learning (ResNet 101 CNN) | High sensitivity for detecting flat lesions, sessile serrated lesions, and advanced colorectal polyps | 173 polyps, 35114 polyp positive frames, 634988 polyp negative frames across multiple datasets | Per polyp sensitivity: 100%, 98.9%, and 79.5% (in subtle set); F1 score: Up to 87.9%; CNN outperformed expert and trainee endoscopists in detection speed and accuracy |

| Lei et al[16], 2023 | Polyp detection via colon capsule endoscopy | Deep learning (CNN, AiSPEED™) | Feasibility and diagnostic accuracy of AI assisted reading compared to clinician interpretation | Target: 674 patients (597 needed for power; both prospective and retrospective recruitment) | Sensitivity/specificity of AI to be compared to clinician standard; exact results pending (study ongoing) |

| Eckhoff et al[17], 2023 | Surgical phase recognition for Ivor-Lewis esophagectomy | CNN + LSTM (TEsoNet), transfer learning | Demonstrated feasibility of knowledge transfer from sleeve gastrectomy to esophagectomy with moderate accuracy | 60 sleeve gastrectomy videos, 40 esophagectomy videos (used in combinations across 5 experiments) | Single procedure accuracy: 87.7% (sleeve). Transfer learning: 23.4% overall (4 overlapping phases: 58.6%). Co training max accuracy: 40.8% |

| Blum et al[18], 2024 | Prediction of choledocholithiasis | Logistic regression, RF, XGBoost, KNN; ensemble model | Machine learning models can outperform ASGE guidelines in predicting choledocholithiasis risk using pre MRCP data | 222 patients | AUROC: 0.83 (RF), accuracy: 0.81 (ensemble), sensitivity: Up to 0.94, F1 score: Up to 0.82 |

| Axon[19], 2020 | Evolution and future of digestive endoscopy | Conceptual AI (future prediction) | Highlights AI’s potential to surpass expert level diagnostic accuracy and support real time treatment decisions | | Predicts that AI will revolutionize diagnosis and treatment in endoscopy with real time support tools |

| Hsu et al[20], 2023 | Predicting postoperative GIB after bariatric surgery | RF, XGBoost, NNs | Machine learning models outperform logistic regression in predicting GIB, aiding clinical decision making | 159959 patients (632 with GIB) | RF AUROC: 0.764, sensitivity: 75.4%, specificity: 70.0%; XGBoost AUROC: 0.746; NN AUROC: 0.741; logistic regression AUROC: 0.709 |

| Athanasiadis et al[21], 2025 | Accuracy of self-assessment in laparoscopic cholecystectomy (CVS quality) | | Surgeons frequently overestimate CVS performance; self-assessment alone is insufficient | 25 surgeons enrolled, 13 submitted 1 video, 4 submitted 2 videos | No surgeon achieved adequate CVS per expert review; significant discrepancy between self and expert ratings on Strasberg scale |

| Bisschops et al[22], 2019 | Advanced endoscopic imaging for colorectal neoplasia | | Guidance on when to use advanced imaging (HD WLE, CE, NBI, etc.) for detection and differentiation of colorectal lesions | | AI suggested for future use if validated; various imaging techniques reviewed for ADR, miss rates, lesion detection |

| Han et al[23], 2025 | Intraoperative recognition of PAN during total mesorectal excision | DeepLabv3+ with ResNet50 backbone | AI model (AINS) achieved real time neuro recognition of PAN, aiding nerve preservation during rectal cancer surgery | 1780 images (1424 training, 356 validation) | Accuracy: 0.9609, precision: 0.7494, recall: 0.6587, F1 score: 0.7011; AI outperformed surgeons (F1: 0.4568) and operated faster (3 minutes vs 25 minutes) |
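Several rows in Table 2 report precision, recall, and F1 score together. Because F1 is the harmonic mean of precision and recall, the three values can be cross-checked against one another. The short sketch below does this for the figures listed for Han et al[23]; the function name `f1_score` is illustrative and not taken from any of the cited studies.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values as listed for Han et al[23] in Table 2.
reported = {"precision": 0.7494, "recall": 0.6587, "f1": 0.7011}

computed = f1_score(reported["precision"], reported["recall"])
print(f"computed F1 = {computed:.4f}, reported F1 = {reported['f1']:.4f}")
```

The recomputed value agrees with the reported F1 to four decimal places. The gap between the reported accuracy (0.9609) and F1 (0.7011) is the kind of difference that can arise when most pixels belong to the background class, although the review does not state the class balance for this dataset.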

The integration of AI into the operating room and endoscopy suite represents a major advance in GI surgery. By providing real-time guidance for anatomical identification and lesion characterization, these tools reduce the cognitive load on surgeons in complex cases and enhance patient safety. Table 3 summarizes recent examples across laparoscopy, white light endoscopy, and endoscopic ultrasonography that employ convolutional neural networks, object-detection frameworks like YOLOv5/YOLOv8, and segmentation networks such as DeepLabv3+. Most of these systems are designed for real-time or near real-time use and have been evaluated against expert performance or clinical outcomes, reflecting their growing reliability in the surgical setting.

| Ref. | Surgical context | AI method/model | Guidance function | Data type/source | Real time capability | Performance metrics |

| Sato et al[24], 2022 | Thoracoscopic esophagectomy | DeepLabv3+ (CNN based semantic segmentation) | Recurrent laryngeal nerve identification and navigation | 3000 annotated intraoperative images from 20 videos (train/val) + 40 test images from 8 other videos | Yes | Dice: AI 0.58, expert 0.62, general surgeons 0.47 |

| Niikura et al[25], 2022 | Upper GI endoscopy | Single shot multibox detector (CNN) | Detection of gastric cancer in endoscopic images | 23892 white light images from 500 patients (1:1 matched to expert endoscopists) | No | Per patient: 100%, per image: 99.87%, IOU: 0.842 |

| Yang et al[26], 2022 | EUS for subepithelial lesions | ResNet 50 (CNN based deep learning) | Differentiation of gastrointestinal stromal tumors and leiomyomas using EUS | 10439 EUS images from 752 patients (multicenter); 132 prospective patients for clinical validation | Yes | AUC: 0.986 (internal), 0.642 (external), accuracy: 96.2% |

| Schnelldorfer et al[27], 2024 | Staging laparoscopy | YOLOv5 (detection), ensemble ResNet18 CNN (classification) | Identification and classification of peritoneal surface metastases | 4287 lesions from 132 patients (365 biopsied lesions; 3650 image patches) | No | AUC PR: 0.69, AUROC: 0.78, accuracy: 78% |

| Guo et al[28], 2021 | GI endoscopy (multi lesion) | Deep CNN (ResNet 50 with TTA and transfer learning) | Detecting four lesion categories to support endoscopic diagnosis | 327121 WLI images for training; 33959 images in validation from 1734 cases | No (0.05 seconds/image) | Sensitivity: 88.3%, specificity: 90.3%, accuracy: 89.7% |

| Houwen et al[29], 2022 | Colonoscopy | | Defines competence standards (SODA) for AI or endoscopists in optical diagnosis | Simulation studies + systematic literature review; ESGE Delphi consensus panel | Yes | Sensitivity ≥ 90%, specificity ≥ 80% (leave in situ); ≥ 80% both (resect and discard) |

| Tatar and Çubukçu[30], 2024 | Colonoscopy | YOLOv8 based CNN (ColoNet) | Identification of neoplastic/premalignant/malignant lesions; biopsy decision support | 1760 colonoscopy images (306 patients) for training/validation; 91 external images for real time testing | Yes | mAP50: 0.832, accuracy: 82.4%, sensitivity: 70.7%, specificity: 92.0% |

The studies in Table 3 highlight the versatility and expanding role of AI in intraoperative GI procedures. Whether assisting with fine anatomical recognition (e.g., nerves, tumors) or automating detection tasks in endoscopy, AI systems are increasingly achieving or surpassing expert level accuracy. Nevertheless, challenges remain, particularly in ensuring real time compatibility, external validation, and cross platform generalizability. The consistent inclusion of performance metrics and rigorous validation, as demonstrated by these studies, is critical to fostering trust and regulatory approval in the surgical AI ecosystem.
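Table 3 reports overlap metrics of two kinds: the Dice coefficient (Sato et al[24]) and intersection over union, IOU (Niikura et al[25]). The following minimal sketch shows how both are computed from a pair of binary masks and how they relate for a single prediction. The toy masks and all function names are assumptions for illustration only and do not come from the cited studies.

```python
from typing import Sequence, Tuple

Mask = Sequence[Sequence[int]]  # rows of 0/1 pixel labels


def overlap_counts(pred: Mask, truth: Mask) -> Tuple[int, int, int]:
    """Return (intersection, predicted pixels, ground-truth pixels)."""
    inter = pred_sum = truth_sum = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += p & t
            pred_sum += p
            truth_sum += t
    return inter, pred_sum, truth_sum


def dice(pred: Mask, truth: Mask) -> float:
    """Dice = 2|A∩B| / (|A| + |B|); defined as 1.0 when both masks are empty."""
    inter, pred_sum, truth_sum = overlap_counts(pred, truth)
    total = pred_sum + truth_sum
    return 2 * inter / total if total else 1.0


def iou(pred: Mask, truth: Mask) -> float:
    """IoU = |A∩B| / |A∪B|; defined as 1.0 when both masks are empty."""
    inter, pred_sum, truth_sum = overlap_counts(pred, truth)
    union = pred_sum + truth_sum - inter
    return inter / union if union else 1.0


def dice_from_iou(j: float) -> float:
    """For one mask pair, Dice = 2J / (1 + J)."""
    return 2 * j / (1 + j)


# Hypothetical 3x4 masks: the prediction is shifted one pixel to the left.
pred = [[0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
truth = [[0, 0, 1, 1], [0, 0, 1, 1], [0, 0, 0, 0]]

print(f"Dice = {dice(pred, truth):.3f}, IoU = {iou(pred, truth):.3f}")
```

For the same mask pair, Dice is never lower than IoU, so a Dice of 0.58 and an IOU of 0.842 from different studies are not directly comparable. The conversion in `dice_from_iou` holds exactly only for a single prediction; metrics averaged over many images convert only approximately.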

AI is rapidly becoming an important tool for detecting and forecasting postoperative complications, often before they manifest clinically. Through continuous analysis of varied patient data, including electronic health records, postoperative imaging, and physiological signals, AI has the capacity to improve recovery outcomes, shorten hospital stays, and help clinicians make prompt, informed decisions. This section reviews recent research on AI in postoperative monitoring within GI care. As summarized in Table 4, these approaches show strong potential to enhance early detection of complications, minimize overtreatment, and support more precise, personalized postoperative interventions, with applications ranging from hospital discharge prediction to lesion identification and treatment response modeling. However, external validation, interpretability, and integration into clinical workflows remain critical challenges for translating these systems into routine use. Even so, these studies collectively illustrate how AI can serve as a powerful ally in the postoperative management of GI patients.

| Ref. | Complication targeted | AI method/model | Timing of prediction | Data source/type | Performance metrics | Clinical utility |

| van de Sande et al[31], 2022 | Need for hospital specific interventions (e.g., reoperation, radiological intervention, IV antibiotics) | Random forest (4 variants tested) | After second postoperative day | EHR data from 3 non-academic hospitals; 18 perioperative variables (e.g., age, BMI, ASA, meds, surgery time) | AUROC: 0.83, sensitivity: 77.9%, specificity: 79.2%, PPV: 61.6%, NPV: 89.3% | Supports early safe discharge and capacity management |

| Choi et al[32], 2024 | Missed small bowel lesions in negative capsule endoscopy | CNN | After initial human reading of SBCE videos | 103 negative SBCE videos retrospectively reanalyzed; images from two academic hospitals | CNN detected additional lesions in 61.2% of cases; model had > 96% accuracy in prior study (AUROC = 0.9957) | Reduces diagnostic oversight; changed diagnosis in 10.3% |

| Blum et al[18], 2024 | Choledocholithiasis | Logistic regression, random forest, XGBoost, KNN, ensemble | Before MRCP, using pre intervention data | Retrospective data from 222 patients (clinical, biochemical, imaging variables) from Royal Hobart Hospital | Ensemble & random forest model (accuracy: 0.81, AUROC: 0.83, sensitivity: 0.94, specificity: 0.69, F1: 0.82) | Avoids unnecessary MRCP, triages patients for ERCP |

| Haak et al[33], 2022 | Incomplete response after CRT in rectal cancer | CNNs (EfficientNet B2, Xception, etc.); FFN for clinical data; combined model | After chemoradiation, pre surgery | 226 patients; 731 endoscopic images; clinical features from single institute retrospective cohort | EfficientNet B2-AUC: 0.83, accuracy: 0.75, sensitivity: 0.77, specificity: 0.75, PPV: 0.74, NPV: 0.77 | Identifies candidates for non-surgical follow up |

| Noar and Khan[34], 2023 | GP | AI derived GMAT threshold using multivariate regression | Pre-treatment (based on GMA from EGG + WLST) | 30 patients with GP; GMA via EGG; WLST; gastric emptying tests; symptom scores (GCSI DD, Leeds) | Sensitivity: 96%, specificity: 75%, accuracy: 93%, AUC: 0.95 for GMAT ≥ 0.59 | Guides selection for balloon dilation; personalized therapy |
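Table 4 lists positive and negative predictive values alongside sensitivity and specificity. Unlike sensitivity and specificity, predictive values depend on how common the outcome is in the study cohort. The sketch below applies Bayes' rule to the figures listed for van de Sande et al[31] and back-calculates the outcome prevalence they imply. That prevalence is an inference from the reported metrics, not a number stated in this review, and the function names are illustrative.

```python
def ppv(sens: float, spec: float, prev: float) -> float:
    """Positive predictive value from sensitivity, specificity, prevalence."""
    true_pos = sens * prev
    false_pos = (1 - spec) * (1 - prev)
    return true_pos / (true_pos + false_pos)


def npv(sens: float, spec: float, prev: float) -> float:
    """Negative predictive value from sensitivity, specificity, prevalence."""
    true_neg = spec * (1 - prev)
    false_neg = (1 - sens) * prev
    return true_neg / (true_neg + false_neg)


def implied_prevalence(sens: float, spec: float, ppv_value: float) -> float:
    """Solve the PPV formula for prevalence, given a reported PPV."""
    false_pos_rate = 1 - spec
    return ppv_value * false_pos_rate / (
        sens * (1 - ppv_value) + ppv_value * false_pos_rate
    )


# Metrics as listed for van de Sande et al[31] in Table 4.
sens, spec, reported_ppv, reported_npv = 0.779, 0.792, 0.616, 0.893

prev = implied_prevalence(sens, spec, reported_ppv)  # inferred, not reported
print(f"implied prevalence = {prev:.3f}")
print(f"NPV at that prevalence = {npv(sens, spec, prev):.3f} "
      f"(reported {reported_npv:.3f})")

# The same classifier applied where the outcome is rarer (hypothetical 5%).
print(f"PPV at 5% prevalence = {ppv(sens, spec, 0.05):.3f}")
```

The NPV recomputed at the implied prevalence can be compared with the reported value as a consistency check. The final line illustrates why a PPV from one cohort should not be carried over to a setting with a different event rate.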

AI-enhanced endoscopic systems are rapidly transforming the landscape of GI cancer diagnosis and surveillance. By enabling real time lesion detection, precise localization, and classification, these systems enhance diagnostic accuracy and standardize quality control in upper and lower GI endoscopy. Table 5 highlights a wide range of studies that apply deep learning algorithms, object detection models, and reinforcement learning in various endoscopic modalities such as white light endoscopy, capsule endoscopy, and endoscopic ultrasonography to support the detection and management of GI neoplasia. From real time detection and documentation auditing to risk stratification and capsule reading automation, these systems demonstrate exceptional performance. Notably, many models now match or exceed expert level accuracy, particularly in detecting subtle lesions or enhancing procedural completeness. However, continued efforts in prospective validation, generalizability across populations, and clinical integration are necessary to ensure routine use. Nevertheless, AI-assisted endoscopy is poised to become a standard in GI cancer diagnosis and surveillance.

| Ref. | Cancer type/lesion | Endoscopic modality | AI method/model | Task/objective | Dataset size/source | Performance metrics | Clinical relevance/impact |

| Messmann et al[35], 2022 | BERN | Upper GI endoscopy | AI assisted deep learning systems | Real time detection and localization of Barrett’s neoplasia | Meta analyzer and real time work (n > 1000 images) | Sensitivity: 83.7%-95.4%, accuracy: 88%-96% | Improves detection of subtle lesions; supports targeted biopsies over Seattle protocol |

| Choi et al[36], 2022 | | EGD | CNN (squeeze and excitation network) | Classification of anatomical landmarks and completeness of photo documentation | 2599 images from 250 EGD procedures (Korea University Hospital) | Landmark classification: Accuracy 97.58%, sensitivity 97.42%, specificity 99.66%; completeness detection: Accuracy 89.20%, specificity 100% | Enhances quality control in EGD by verifying complete anatomical documentation automatically |

| Inaba et al[37], 2024 | | Colonoscopy (preparation phase) | MobileNetV3 based CNN (smartphone app) | AI based stool image classification to assess bowel preparation quality | 1689 images from 121 patients; 106 patient prospective validation | Accuracy: 90.2% (grade 1), 65.0% (grade 2), 89.3% (grade 3); BBPS ≥ 6 in 99.0% of app users | Improved bowel prep monitoring; 100% cecal intubation; reduced burden on patients and nurses |

| Zhang et al[14], 2023 | Suspected choledocholithiasis | Not applicable (pre-endoscopy prediction) | ModelArts AI platform (Huawei); 7 machine learning models also tested | Predictive classification of CBD stones before cholecystectomy | 1199 patients with symptomatic gallstones; retrospective, single center | ModelArts AI: Accuracy 0.97, recall 0.97, precision 0.971, F1 score 0.97 | May outperform guideline based risk stratification; reduces unnecessary ERCP |

| Wu et al[38], 2021 | EGC | EGD | ENDOANGEL system (CNNs + deep reinforcement learning) | Real time monitoring of blind spots and detection of EGC | 1050 patients in multicenter RCT; 196 gastric lesions biopsied | Accuracy: 84.7%, sensitivity: 100%, specificity: 84.3% | Reduced blind spots, improved EGD quality, potential for real time EGC detection in clinical setting |

| Rondonotti et al[39], 2023 | DRSPs ≤ 5 mm | Colonoscopy with blue light imaging | CAD EYE (Fujifilm, Tokyo, Japan), CNN based real time system | Optical diagnosis to support “resect and discard” strategy | 596 DRSPs in 389 patients, 4 center prospective study (Italy) | NPV: 91.0%, sensitivity: 88.6%, specificity: 88.1%, accuracy: 88.4% | Meets ASGE PIVI thresholds; may enable safe omission of histology in DRSPs, especially beneficial for nonexperts |

| Koh et al[40], 2023 | Colonic adenomas including SSA | Colonoscopy | GI Genius™ (CADe system, Medtronic, MN, United States) | Real time detection of colonic polyps and ADR improvement | 298 colonoscopies; 487 AI “hits”; 250 polyps removed | Post AI ADR: 30.4% vs baseline 24.3% (P = 0.02); SSA rate: 5.6% | Enhanced ADR even in experienced endoscopists; improved SSA detection; supports AI use in routine colonoscopy |

| Yuan et al[41], 2022 | Gastric lesions (EGC, AGC, SMT, polyp, PU, erosion) | White light endoscopy | YOLO based DCNN model | Multiclass diagnosis of six gastric lesions + lesion free mucosa | 31388 images (29809 train/1579 test) from 9443 patients | Overall accuracy: 85.7%; EGC: Sensitivity 59.2%, specificity 99.3%; AGC: Sensitivity 100%, specificity 98.1% | Comparable to senior endoscopists; improved diagnostic accuracy and efficiency; potential for real time support in diverse gastric lesion detection |

| Munir et al[42], 2024 | | Not applicable (survey based assessment) | ChatGPT | Evaluation of AI responses to perioperative GI surgery questions | 1080 responses assessed by 45 surgeons | Majority graded “fair” or “good” (57.6%); highest “very good/excellent” rate for cholecystectomy (45.3%) | ChatGPT may aid in patient education, but only 20% deemed it accurate; limited utility in reducing message load |

| Sudarevic et al[43], 2023 | Colorectal polyps | Colonoscopy | Poseidon system (EndoMind + waterjet based AI) | AI based in situ measurement of polyp size using waterjet as reference | 28 polyps in silicone model + 29 polyps in routine colonoscopies | Median error: Poseidon 7.4% (model), 7.7% (clinical); visual: 25.1%/22.1%; forceps: 20.0% | Significantly improved sizing accuracy; does not require additional tools; useful for clinical polyp surveillance and resection decisions |

| Tsuboi et al[44], 2020 | Small bowel angioectasia | Capsule endoscopy (PillCam SB2/SB3, Medtronic, MN, United States) | CNN (single shot multibox detector) | Automatic detection of angioectasia in CE images | 2237 training images, 10488 validation images (488 angioectasia, 10000 normal) | AUC: 0.998; sensitivity: 98.8%, specificity: 98.4%, PPV: 75.4%, NPV: 99.9% | Enables high accuracy detection of angioectasia; may reduce oversight and physician workload during capsule reading |

| Chang et al[45], 2022 | | Upper GI endoscopy (EGD) | ResNeSt deep learning model | Evaluate photodocumentation completeness via anatomical classification | 15305 training images; 15723 test images from 472 EGD cases | Accuracy: 96.64% (deep learning model), photodocumentation rate: 78% (esophagus duodenum), 53.8% (pharynx duodenum) | Enables automated auditing of image completeness; higher completeness linked to higher ADR; applicable for routine EGD quality control |

| Hwang et al[46], 2021 | Small bowel hemorrhagic and ulcerative lesions | CE | VGGNet based CNN + Grad CAM | Classification and localization of hemorrhagic vs ulcerative lesions | 30224 abnormal + 30224 normal images (train); 5760 images (validation) | Combined model: Accuracy 96.83%, sensitivity 97.61%, specificity 96.04%, AUROC approximately 0.996 | Enhanced lesion localization without manual annotation; Grad CAM improves interpretability; supports efficient clinical CE analysis |

| Jazi et al[47], 2023 | | Not applicable (survey + clinical scenarios) | ChatGPT 4 (LLM by OpenAI) | Assess alignment of ChatGPT 4 with expert opinions on bariatric surgery suitability and recommendations | 10 patient scenarios; 30 international bariatric surgeons | Expert match: 30%; ChatGPT 4 inconsistency: 40%; recommended surgery in 60% vs experts 90% | ChatGPT 4 showed limited alignment and inconsistency; suitable for education, but not yet reliable for clinical decision making |

| Meinikheim et al[48], 2024 | BERN | Upper GI endoscopy (video based) | DeepLabV3+ with ResNet50 backbone (clinical decision support system) | Evaluate add on effect of AI on endoscopist performance in BERN detection | 96 videos from 72 patients; 51273 images (train); 22 endoscopists from 12 centers | AI alone: Sensitivity 92.2%, specificity 68.9%, accuracy 81.3%; nonexperts with AI: Sensitivity up from 69.8% to 78.0%, specificity up from 67.3% to 72.7% | AI significantly improved nonexperts’ diagnostic performance and confidence; comparable accuracy to experts; highlights human AI interaction dynamics |

| Ahmad et al[49], 2021 | | Colonoscopy | | Identify top research priorities for AI implementation in colonoscopy | 15 international experts from 9 countries; 3 Delphi rounds | Not performance focused; methodology scores used for consensus | Provides a structured framework to guide future AI implementation research in colonoscopy; emphasizes clinical trial design, data annotation, integration, and regulation |

| Lazaridis et al[50], 2021 | | CE | | Assess adherence to ESGE guidelines and future perspectives on CE use | 217 respondents from 47 countries via ESGE survey | Not model based; survey: 91% performed CE with appropriate indication; 84.1% classified findings as relevant/irrelevant | Highlights variation in guideline adherence; AI identified as top development priority (56.2%); suggests need for standardization and formal CE training |

| Tian et al[51], 2024 | | EUS | CNN with attention module | Automatic identification of 14 standard BPS anatomical sites on EUS | 6230 training images (1812 patients), internal: 1569 images (47 patients), external: 85322 images (131 patients from 16 centers) | Sensitivity: 89.45%-99.92%, specificity: 93.35%-99.79%, accuracy (internal): 92.1%-100%, kappa: 0.84-0.98 | Outperforms beginners, comparable to experts; enables efficient, high quality anatomical identification in EUS; potential for training and standardization |

| He et al[52], 2020 | | Upper GI endoscopy (EGD) | CNN models (DenseNet 121, ResNet 50, VGG, etc.) | Automated classification of 11 anatomical sites for quality control and reporting | 3704 images from 211 routine EGD cases (Tianjin Medical University Hospital, Tianjin, China) | DenseNet 121: Accuracy approximately 91.11%, F1 scores up to 94.92% for specific sites | Supports automated quality assurance in EGD via accurate site classification; aids report generation and completeness verification |

As AI continues to evolve within surgical practice, its applications in education and skill evaluation are becoming increasingly relevant. Rather than replacing educators, AI can serve as a scalable adjunct for performance assessment, decision making training, and guideline adherence. Table 6 summarizes recent studies exploring AI’s potential in surgical education, including the use of large language models (LLMs) like ChatGPT, as well as structured surgical training environments in robotics.

| Ref. | Surgical procedure/task | AI method/model | Assessment modality | Data type/source | Performance metrics | Educational outcome |

| Garfinkle et al[53], 2022 | Gastrointestinal and endoscopic surgery (priority setting) | | Survey/Delphi | Survey data from SAGES member surgeons | | Identified core needs: Video training, tech adoption |

| Huo et al[54], 2024 | Surgical decision making for GERD | ChatGPT 3.5/4, Copilot, Google Bard, Perplexity AI | Prompt based guideline comparison | Standardized clinical vignettes based on SAGES guidelines | Surgeons (accuracy): Bard 6/7 (85.7%), ChatGPT 4 5/7 (71.4%); patients (accuracy): Bard 4/5 (80.0%), ChatGPT 4 3/5 (60.0%); children: Copilot & Bard 3/3 (100.0%) | Revealed inconsistencies in LLM advice; need for medical domain training |

| Huo et al[55], 2024 | Surgical management of GERD | Generic ChatGPT 4 vs customized GPT (GTS) | Prompt based guideline comparison | 60 surgeon cases and 40 patient cases based on SAGES and UEG EAES guidelines | GTS (custom GPT): 100% accuracy for both surgeons (60/60) and patients (40/40), generic GPT 4 66.7% (40/60) for surgeons, 47.5% (19/40) for patients | Demonstrated impact of domain customization in LLMs |

| Nasir et al[56], 2021 | Robotic rectal cancer surgery | No AI model used (robotics only) | | RCTs, observational studies, registry data | Reduced conversion to open surgery (especially in obese/male patients); improved urogenital function; no difference in long term oncologic outcomes | Reinforced need for structured robotic training (e.g., EARCS) |

As illustrated in Table 6, LLMs hold promise for enhancing surgical education but also pose risks if implemented without domain adaptation. Customized models such as the gastroesophageal reflux disease tool for surgery (GTS) substantially outperformed generic ones, reinforcing the need for training data specific to the medical context. Meanwhile, robotic surgery continues to drive a parallel need for structured training curricula, which may eventually be augmented by AI-powered assessment tools such as video analysis and simulation scoring. Ultimately, future directions should aim to integrate AI into both formative (training) and summative (certification) surgical education systems.
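The accuracy percentages in Table 6 are simple proportions of guideline-concordant responses, so they can be recomputed from the reported counts. The short Python sketch below does this as a consistency check. The counts and percentages are those listed in Table 6; the row labels, the variable `TABLE6_ACCURACY`, and the helper names are shorthand introduced here for illustration.

```python
# (label, correct, total, reported %) as listed in Table 6.
# Labels are shorthand introduced for this sketch.
TABLE6_ACCURACY = [
    ("Bard, surgeon vignettes", 6, 7, 85.7),
    ("ChatGPT 4, surgeon vignettes", 5, 7, 71.4),
    ("Bard, patient vignettes", 4, 5, 80.0),
    ("ChatGPT 4, patient vignettes", 3, 5, 60.0),
    ("Copilot and Bard, children", 3, 3, 100.0),
    ("GTS, surgeon cases", 60, 60, 100.0),
    ("GTS, patient cases", 40, 40, 100.0),
    ("Generic GPT 4, surgeon cases", 40, 60, 66.7),
    ("Generic GPT 4, patient cases", 19, 40, 47.5),
]


def percent(correct: int, total: int) -> float:
    """Proportion of correct responses as a percentage, one decimal place."""
    return round(100 * correct / total, 1)


def find_mismatches(rows):
    """Return labels whose recomputed percentage differs from the reported one."""
    return [
        label
        for label, correct, total, reported in rows
        if abs(percent(correct, total) - reported) > 0.05
    ]


for label, correct, total, reported in TABLE6_ACCURACY:
    print(f"{label}: {correct}/{total} = {percent(correct, total)}% (reported {reported}%)")

mismatches = find_mismatches(TABLE6_ACCURACY)
```

The small denominators in the first study (3 to 7 vignettes per group) mean that a single response shifts accuracy by 14 to 33 percentage points, which is worth keeping in mind when comparing those figures with the 60-case and 40-case evaluations of the customized model.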

This systematic review aimed to assess the current role of AI in GI surgery and to investigate the future trajectory of the field. The evidence from the included studies demonstrates that AI is no longer a merely futuristic concept; it is being employed to improve surgical planning, decision-making in the operating room, postoperative patient monitoring, and the training of future surgeons.

During the preoperative phase, AI has demonstrated significant potential for enhancing risk assessment. Models such as random forests and ensemble learning have consistently surpassed conventional clinical standards in predicting the probability of choledocholithiasis and lymph node metastases. These technologies could facilitate more individualized surgical planning and may help decrease the need for superfluous diagnostic tests or treatments.

Intraoperatively, AI is becoming a significant support tool, functioning as an additional set of eyes. Models utilizing convolutional neural networks, YOLO algorithms, and reinforcement learning are employed to recognize anatomical features in real time, thereby augmenting surgical precision and enhancing safety, especially in intricate or high-risk procedures. Although additional validation in real-world, multicenter environments is necessary, the results are encouraging.

AI is also assuming an increasingly significant role in the postoperative phase. Multiple models have accurately forecast complications, including hemorrhage and protracted wound healing, by analyzing data derived from electronic health records, imaging, and physiological signals. These systems have the capacity to facilitate earlier clinical intervention, expedite recovery, and reduce hospital stays.

In endoscopy, AI has facilitated improved diagnosis of GI malignancies and better procedural documentation. Automated technologies have resulted in increased adenoma detection rates and less variability among endoscopists. Capsule endoscopy has particularly benefited from AI’s capacity to swiftly and precisely analyze large quantities of image data.

The application of AI in surgical education, although nascent, shows promise. The advancement of LLMs, such as ChatGPT, has created new opportunities for education and training. Although general-purpose models currently lack reliability for clinical decision-making, domain-specific LLMs trained on surgical guidelines exhibit superior performance and may ultimately offer customized feedback or explanations during surgical training.

Significant obstacles persist. Numerous studies examined here lacked external validation or long-term follow-up data, and several were limited to retrospective analyses or performed within a single institution. Interpretability also remains a significant issue; for AI technologies to achieve broad clinical acceptance, they must be transparent and comprehensible to physicians.

Our comprehensive synthesis suggests that AI-driven algorithms can meaningfully enhance GI surgical workflows by improving lesion detection rates, optimizing patient selection through more accurate risk models, and supporting real-time anatomic guidance. To translate these benefits into everyday practice, institutions must invest in staff training for AI interpretation, develop robust data governance and integration pipelines, and establish multidisciplinary teams that include surgeons and data scientists to oversee model validation and deployment.

Several methodological factors may limit the scope of our findings. First, by focusing solely on the WoS Core Collection, our search may have overlooked pertinent studies indexed in other bibliographic databases or published as conference proceedings and technical reports. Likewise, although restricting the search to the “Surgery” category of the WoS Core Collection was intended to capture the most impactful GI surgery and AI publications, it may have excluded relevant studies indexed under adjacent disciplines, thereby introducing a degree of residual selection bias. Second, we did not conduct a formal grey literature search or include non-English language articles, which could introduce publication and language biases. Finally, heterogeneity in reporting standards across primary studies, particularly regarding external validation and performance metrics, constrained our ability to perform a meta-analysis. Future reviews should consider broader database coverage, inclusion of unpublished and non-English sources, and standardized data extraction protocols to enhance completeness and reduce bias.

AI is rapidly transforming the practice of GI surgery and increasingly serves as a valuable and adaptable instrument for enhancing patient care: facilitating risk prediction prior to surgery, providing assistance during procedures, and monitoring recovery thereafter. Numerous systems examined in this study currently demonstrate performance comparable to, or occasionally superior to, that of seasoned physicians for specific tasks. However, for AI to integrate into routine surgical practice, several critical measures must be undertaken. These technologies must be trained on high-quality, diverse datasets. They must also be comprehensible and reliable for the medical teams utilizing them. Equally important, they must integrate seamlessly with existing hospital systems without complicating operations. The objective is not to supplant surgeons, but to assist them by enhancing decision-making and increasing the safety and efficacy of surgical procedures. Through continuous collaboration among physicians, researchers, and developers, AI can serve as a dependable ally in both standard and complex GI surgical procedures.

| 1. | Celotto F, Capelli G, Ferrari S, Scarpa M, Pucciarelli S, Spolverato G. Application and use of artificial intelligence in colorectal cancer surgery: where are we? Art Int Surg. 2024;4:348-363. |

| 2. | Mosca V, Fuschillo G, Sciaudone G, Sahnan K, Selvaggi F, Pellino G. Use of artificial intelligence in total mesorectal excision in rectal cancer surgery: State of the art and perspectives. Artif Intell Gastroenterol. 2023;4:64-71. |

| 3. | Shao S, Zhao Y, Lu Q, Liu L, Mu L, Qin J. Artificial intelligence assists surgeons' decision-making of temporary ileostomy in patients with rectal cancer who have received anterior resection. Eur J Surg Oncol. 2023;49:433-439. |

| 4. | Hossain I, Madani A, Laplante S. Machine learning perioperative applications in visceral surgery: a narrative review. Front Surg. 2024;11:1493779. |

| 5. | Ichimasa K, Kudo SE, Mori Y, Misawa M, Matsudaira S, Kouyama Y, Baba T, Hidaka E, Wakamura K, Hayashi T, Kudo T, Ishigaki T, Yagawa Y, Nakamura H, Takeda K, Haji A, Hamatani S, Mori K, Ishida F, Miyachi H. Artificial intelligence may help in predicting the need for additional surgery after endoscopic resection of T1 colorectal cancer. Endoscopy. 2018;50:230-240. |

| 6. | Arredondo Montero J. From the mathematical model to the patient: The scientific and human aspects of artificial intelligence in gastrointestinal surgery. World J Gastrointest Surg. 2024;16:1517-1520. |

| 7. | Sakamoto T, Goto T, Fujiogi M, Kawarai Lefor A. Machine learning in gastrointestinal surgery. Surg Today. 2022;52:995-1007. |

| 8. | Fried GM, Ortenzi M, Dayan D, Nizri E, Mirkin Y, Maril S, Asselmann D, Wolf T. Surgical Intelligence Can Lead to Higher Adoption of Best Practices in Minimally Invasive Surgery. Ann Surg. 2024;280:525-534. |

| 9. | Kinross JM, Mason SE, Mylonas G, Darzi A. Next-generation robotics in gastrointestinal surgery. Nat Rev Gastroenterol Hepatol. 2020;17:430-440. |

| 10. | Brunner S, Müller DT, Eckhoff JA, Reisewitz A, Schiffmann LM, Schröder W, Schmidt T, Bruns CJ, Fuchs HF. Innovative Operationsroboter und Operationstechnik für den Einsatz am oberen Gastrointestinaltrakt. Onkologie. 2023;29:506-514. |

| 11. | Avram MF, Lazăr DC, Mariş MI, Olariu S. Artificial intelligence in improving the outcome of surgical treatment in colorectal cancer. Front Oncol. 2023;13:1116761. |

| 12. | Fuentes SMS, Chávez LAF, López EMM, Cardona CDC, Goti LLM. The impact of artificial intelligence in general surgery: enhancing precision, efficiency, and outcomes. Int J Res Med Sci. 2025;13:293-297. |

| 13. | Galvis-García E, Vega-González FJ, Emura F, Teramoto-Matsubara Ó, Sánchez-Robles JC, Rodríguez-Vanegas G, Sobrino-Cossío S. Inteligencia artificial en la colonoscopia de tamizaje y la disminución del error. Cir Cir. 2023;91:411-421. |

| 14. | Zhang H, Gao J, Sun Z, Zhang Q, Qi B, Jiang X, Li S, Shang D. Diagnostic accuracy of updated risk assessment criteria and development of novel computational prediction models for patients with suspected choledocholithiasis. Surg Endosc. 2023;37:7348-7357. |

| 15. | Ahmad OF, González-Bueno Puyal J, Brandao P, Kader R, Abbasi F, Hussein M, Haidry RJ, Toth D, Mountney P, Seward E, Vega R, Stoyanov D, Lovat LB. Performance of artificial intelligence for detection of subtle and advanced colorectal neoplasia. Dig Endosc. 2022;34:862-869. |

| 16. | Lei II, Tompkins K, White E, Watson A, Parsons N, Noufaily A, Segui S, Wenzek H, Badreldin R, Conlin A, Arasaradnam RP. Study of capsule endoscopy delivery at scale through enhanced artificial intelligence-enabled analysis (the CESCAIL study). Colorectal Dis. 2023;25:1498-1505. |

| 17. | Eckhoff JA, Ban Y, Rosman G, Müller DT, Hashimoto DA, Witkowski E, Babic B, Rus D, Bruns C, Fuchs HF, Meireles O. TEsoNet: knowledge transfer in surgical phase recognition from laparoscopic sleeve gastrectomy to the laparoscopic part of Ivor-Lewis esophagectomy. Surg Endosc. 2023;37:4040-4053. |

| 18. | Blum J, Hunn S, Smith J, Chan FY, Turner R. Using artificial intelligence to predict choledocholithiasis: can machine learning models abate the use of MRCP in patients with biliary dysfunction? ANZ J Surg. 2024;94:1260-1265. |

| 19. | Axon ATR. Fifty years of digestive endoscopy: Successes, setbacks, solutions and the future. Dig Endosc. 2020;32:290-297. |

| 20. | Hsu JL, Chen KA, Butler LR, Bahraini A, Kapadia MR, Gomez SM, Farrell TM. Application of machine learning to predict postoperative gastrointestinal bleed in bariatric surgery. Surg Endosc. 2023;37:7121-7127. |

| 21. | Athanasiadis DI, Makhecha K, Blundell N, Mizota T, Anderson-Montoya B, Fanelli RD, Scholz S, Vazquez R, Gill S, Stefanidis D; SAGES Safe Cholecystectomy Task Force. How Accurate Are Surgeons at Assessing the Quality of Their Critical View of Safety During Laparoscopic Cholecystectomy? J Surg Res. 2025;305:36-40. |

| 22. | Bisschops R, East JE, Hassan C, Hazewinkel Y, Kamiński MF, Neumann H, Pellisé M, Antonelli G, Bustamante Balen M, Coron E, Cortas G, Iacucci M, Yuichi M, Longcroft-Wheaton G, Mouzyka S, Pilonis N, Puig I, van Hooft JE, Dekker E. Advanced imaging for detection and differentiation of colorectal neoplasia: European Society of Gastrointestinal Endoscopy (ESGE) Guideline - Update 2019. Endoscopy. 2019;51:1155-1179. |

| 23. | Han F, Zhong G, Zhi S, Han N, Jiang Y, Tan J, Zhong L, Zhou S. Artificial Intelligence Recognition System of Pelvic Autonomic Nerve During Total Mesorectal Excision. Dis Colon Rectum. 2025;68:308-315. |

| 24. | Sato K, Fujita T, Matsuzaki H, Takeshita N, Fujiwara H, Mitsunaga S, Kojima T, Mori K, Daiko H. Real-time detection of the recurrent laryngeal nerve in thoracoscopic esophagectomy using artificial intelligence. Surg Endosc. 2022;36:5531-5539. |

| 25. | Niikura R, Aoki T, Shichijo S, Yamada A, Kawahara T, Kato Y, Hirata Y, Hayakawa Y, Suzuki N, Ochi M, Hirasawa T, Tada T, Kawai T, Koike K. Artificial intelligence versus expert endoscopists for diagnosis of gastric cancer in patients who have undergone upper gastrointestinal endoscopy. Endoscopy. 2022;54:780-784. |

| 26. | Yang X, Wang H, Dong Q, Xu Y, Liu H, Ma X, Yan J, Li Q, Yang C, Li X. An artificial intelligence system for distinguishing between gastrointestinal stromal tumors and leiomyomas using endoscopic ultrasonography. Endoscopy. 2022;54:251-261. |

| 27. | Schnelldorfer T, Castro J, Goldar-Najafi A, Liu L. Development of a Deep Learning System for Intraoperative Identification of Cancer Metastases. Ann Surg. 2024;280:1006-1013. |

| 28. | Guo L, Gong H, Wang Q, Zhang Q, Tong H, Li J, Lei X, Xiao X, Li C, Jiang J, Hu B, Song J, Tang C, Huang Z. Detection of multiple lesions of gastrointestinal tract for endoscopy using artificial intelligence model: a pilot study. Surg Endosc. 2021;35:6532-6538. |

| 29. | Houwen BBSL, Hassan C, Coupé VMH, Greuter MJE, Hazewinkel Y, Vleugels JLA, Antonelli G, Bustamante-Balén M, Coron E, Cortas GA, Dinis-Ribeiro M, Dobru DE, East JE, Iacucci M, Jover R, Kuvaev R, Neumann H, Pellisé M, Puig I, Rutter MD, Saunders B, Tate DJ, Mori Y, Longcroft-Wheaton G, Bisschops R, Dekker E. Definition of competence standards for optical diagnosis of diminutive colorectal polyps: European Society of Gastrointestinal Endoscopy (ESGE) Position Statement. Endoscopy. 2022;54:88-99. |

| 30. | Tatar OC, Çubukçu A. Surgical Insight-guided Deep Learning for Colorectal Lesion Management. Surg Laparosc Endosc Percutan Tech. 2024;34:559-565. |

| 31. | van de Sande D, van Genderen ME, Verhoef C, Huiskens J, Gommers D, van Unen E, Schasfoort RA, Schepers J, van Bommel J, Grünhagen DJ. Optimizing discharge after major surgery using an artificial intelligence-based decision support tool (DESIRE): An external validation study. Surgery. 2022;172:663-669. |

| 32. | Choi KS, Park D, Kim JS, Cheung DY, Lee BI, Cho YS, Kim JI, Lee S, Lee HH. Deep learning in negative small-bowel capsule endoscopy improves small-bowel lesion detection and diagnostic yield. Dig Endosc. 2024;36:437-445. |

| 33. | Haak HE, Gao X, Maas M, Waktola S, Benson S, Beets-Tan RGH, Beets GL, van Leerdam M, Melenhorst J. The use of deep learning on endoscopic images to assess the response of rectal cancer after chemoradiation. Surg Endosc. 2022;36:3592-3600. |

| 34. | Noar M, Khan S. Gastric myoelectrical activity based AI-derived threshold predicts resolution of gastroparesis post-pyloric balloon dilation. Surg Endosc. 2023;37:1789-1798. |

| 35. | Messmann H, Bisschops R, Antonelli G, Libânio D, Sinonquel P, Abdelrahim M, Ahmad OF, Areia M, Bergman JJGHM, Bhandari P, Boskoski I, Dekker E, Domagk D, Ebigbo A, Eelbode T, Eliakim R, Häfner M, Haidry RJ, Jover R, Kaminski MF, Kuvaev R, Mori Y, Palazzo M, Repici A, Rondonotti E, Rutter MD, Saito Y, Sharma P, Spada C, Spadaccini M, Veitch A, Gralnek IM, Hassan C, Dinis-Ribeiro M. Expected value of artificial intelligence in gastrointestinal endoscopy: European Society of Gastrointestinal Endoscopy (ESGE) Position Statement. Endoscopy. 2022;54:1211-1231. |

| 36. | Choi SJ, Khan MA, Choi HS, Choo J, Lee JM, Kwon S, Keum B, Chun HJ. Development of artificial intelligence system for quality control of photo documentation in esophagogastroduodenoscopy. Surg Endosc. 2022;36:57-65. |

| 37. | Inaba A, Shinmura K, Matsuzaki H, Takeshita N, Wakabayashi M, Sunakawa H, Nakajo K, Murano T, Kadota T, Ikematsu H, Yano T. Smartphone application for artificial intelligence-based evaluation of stool state during bowel preparation before colonoscopy. Dig Endosc. 2024;36:1338-1346. |

| 38. | Wu L, He X, Liu M, Xie H, An P, Zhang J, Zhang H, Ai Y, Tong Q, Guo M, Huang M, Ge C, Yang Z, Yuan J, Liu J, Zhou W, Jiang X, Huang X, Mu G, Wan X, Li Y, Wang H, Wang Y, Zhang H, Chen D, Gong D, Wang J, Huang L, Li J, Yao L, Zhu Y, Yu H. Evaluation of the effects of an artificial intelligence system on endoscopy quality and preliminary testing of its performance in detecting early gastric cancer: a randomized controlled trial. Endoscopy. 2021;53:1199-1207. |

| 39. | Rondonotti E, Hassan C, Tamanini G, Antonelli G, Andrisani G, Leonetti G, Paggi S, Amato A, Scardino G, Di Paolo D, Mandelli G, Lenoci N, Terreni N, Andrealli A, Maselli R, Spadaccini M, Galtieri PA, Correale L, Repici A, Di Matteo FM, Ambrosiani L, Filippi E, Sharma P, Radaelli F. Artificial intelligence-assisted optical diagnosis for the resect-and-discard strategy in clinical practice: the Artificial intelligence BLI Characterization (ABC) study. Endoscopy. 2023;55:14-22. |

| 40. | Koh FH, Ladlad J; SKH Endoscopy Centre, Teo EK, Lin CL, Foo FJ. Real-time artificial intelligence (AI)-aided endoscopy improves adenoma detection rates even in experienced endoscopists: a cohort study in Singapore. Surg Endosc. 2023;37:165-171. |

| 41. | Yuan XL, Zhou Y, Liu W, Luo Q, Zeng XH, Yi Z, Hu B. Artificial intelligence for diagnosing gastric lesions under white-light endoscopy. Surg Endosc. 2022;36:9444-9453. |

| 42. | Munir MM, Endo Y, Ejaz A, Dillhoff M, Cloyd JM, Pawlik TM. Online artificial intelligence platforms and their applicability to gastrointestinal surgical operations. J Gastrointest Surg. 2024;28:64-69. |

| 43. | Sudarevic B, Sodmann P, Kafetzis I, Troya J, Lux TJ, Saßmannshausen Z, Herlod K, Schmidt SA, Brand M, Schöttker K, Zoller WG, Meining A, Hann A. Artificial intelligence-based polyp size measurement in gastrointestinal endoscopy using the auxiliary waterjet as a reference. Endoscopy. 2023;55:871-876. |

| 44. | Tsuboi A, Oka S, Aoyama K, Saito H, Aoki T, Yamada A, Matsuda T, Fujishiro M, Ishihara S, Nakahori M, Koike K, Tanaka S, Tada T. Artificial intelligence using a convolutional neural network for automatic detection of small-bowel angioectasia in capsule endoscopy images. Dig Endosc. 2020;32:382-390. |

| 45. | Chang YY, Yen HH, Li PC, Chang RF, Yang CW, Chen YY, Chang WY. Upper endoscopy photodocumentation quality evaluation with novel deep learning system. Dig Endosc. 2022;34:994-1001. |

| 46. | Hwang Y, Lee HH, Park C, Tama BA, Kim JS, Cheung DY, Chung WC, Cho YS, Lee KM, Choi MG, Lee S, Lee BI. Improved classification and localization approach to small bowel capsule endoscopy using convolutional neural network. Dig Endosc. 2021;33:598-607. |

| 47. | Jazi AHD, Mahjoubi M, Shahabi S, Alqahtani AR, Haddad A, Pazouki A, Prasad A, Safadi BY, Chiappetta S, Taskin HE, Billy HT, Kasama K, Mahawar K, Gawdat K, Rheinwalt KP, Miller KA, Kow L, Neto MG, Yang W, Palermo M, Ghanem OM, Lainas P, Peterli R, Kassir R, Puy RV, Da Silva Ribeiro RJ, Verboonen S, Pintar T, Shabbir A, Musella M, Kermansaravi M. Bariatric Evaluation Through AI: a Survey of Expert Opinions Versus ChatGPT-4 (BETA-SEOV). Obes Surg. 2023;33:3971-3980. |

| 48. | Meinikheim M, Mendel R, Palm C, Probst A, Muzalyova A, Scheppach MW, Nagl S, Schnoy E, Römmele C, Schulz DAH, Schlottmann J, Prinz F, Rauber D, Rückert T, Matsumura T, Fernández-Esparrach G, Parsa N, Byrne MF, Messmann H, Ebigbo A. Influence of artificial intelligence on the diagnostic performance of endoscopists in the assessment of Barrett's esophagus: a tandem randomized and video trial. Endoscopy. 2024;56:641-649. |

| 49. | Ahmad OF, Mori Y, Misawa M, Kudo SE, Anderson JT, Bernal J, Berzin TM, Bisschops R, Byrne MF, Chen PJ, East JE, Eelbode T, Elson DS, Gurudu SR, Histace A, Karnes WE, Repici A, Singh R, Valdastri P, Wallace MB, Wang P, Stoyanov D, Lovat LB. Establishing key research questions for the implementation of artificial intelligence in colonoscopy: a modified Delphi method. Endoscopy. 2021;53:893-901. |

| 50. | Lazaridis LD, Tziatzios G, Toth E, Beaumont H, Dray X, Eliakim R, Ellul P, Fernandez-Urien I, Keuchel M, Panter S, Rondonotti E, Rosa B, Spada C, Jover R, Bhandari P, Triantafyllou K, Koulaouzidis A; ESGE Research Committee Small-Bowel Working Group. Implementation of European Society of Gastrointestinal Endoscopy (ESGE) recommendations for small-bowel capsule endoscopy into clinical practice: Results of an official ESGE survey. Endoscopy. 2021;53:970-980. |

| 51. | Tian S, Shi H, Chen W, Li S, Han C, Du F, Wang W, Wen H, Lei Y, Deng L, Tang J, Zhang J, Lin J, Shi L, Ning B, Zhao K, Miao J, Wang G, Hou H, Huang X, Kong W, Jin X, Ding Z, Lin R. Artificial intelligence-based diagnosis of standard endoscopic ultrasonography scanning sites in the biliopancreatic system: a multicenter retrospective study. Int J Surg. 2024;110:1637-1644. |

| 52. | He Q, Bano S, Ahmad OF, Yang B, Chen X, Valdastri P, Lovat LB, Stoyanov D, Zuo S. Deep learning-based anatomical site classification for upper gastrointestinal endoscopy. Int J Comput Assist Radiol Surg. 2020;15:1085-1094. |

| 53. | Garfinkle R, Petersen RP, DuCoin C, Altieri MS, Aggarwal R, Pryor A, Zevin B. Consensus priority research questions in gastrointestinal and endoscopic surgery in the year 2020: results of a SAGES Delphi study. Surg Endosc. 2022;36:6688-6695. |

| 54. | Huo B, Calabrese E, Sylla P, Kumar S, Ignacio RC, Oviedo R, Hassan I, Slater BJ, Kaiser A, Walsh DS, Vosburg W. The performance of artificial intelligence large language model-linked chatbots in surgical decision-making for gastroesophageal reflux disease. Surg Endosc. 2024;38:2320-2330. |

| 55. | Huo B, Marfo N, Sylla P, Calabrese E, Kumar S, Slater BJ, Walsh DS, Vosburg W. Clinical artificial intelligence: teaching a large language model to generate recommendations that align with guidelines for the surgical management of GERD. Surg Endosc. 2024;38:5668-5677. |

| 56. | Nasir I, Mureb A, Aliozo CC, Abunada MH, Parvaiz A. State of the art in robotic rectal surgery: marginal gains worth the pain? Updates Surg. 2021;73:1073-1079. |